My topic for today: All numbers are wrong.

Like, seriously. Whenever you see a number – in a tweet, newspaper headline, office email, technical report, textbook, anywhere – assume it is wrong. Treat it as enemy misinformation, deliberate sabotage of your understanding of the world, and disregard it.

You're thinking, ha ha, I'm exaggerating for effect. I’m not. Seriously, I am not. I mean, of course not all numbers are literally incorrect; but it happens so very, very much more often than your intuition suggests that I do literally mean it is good practice to treat all numbers as incorrect by default.

I’ve come to this position slowly, over the years, one screwup at a time. But now that I’ve really started paying attention, the mistakes are everywhere.

(If you’re wondering what this has to do with climate, stay tuned; I’ll be getting into this in later posts.)

It’s So Easy To Find Examples

The day I sat down to write this post, I skimmed the New York Times headlines for examples. I didn’t have to look far:

“Half a million known virus cases”, huh? During the Omicron peak, the US alone exceeded that many cases every day. Clearly they meant half a billion.

Think about this. The New York Times, in a prominent location – a headline! – used a number that was off by a factor of one thousand. One thousand. Utterly, colossally, absurdly incorrect.

How could that happen? Is their research department using a flawed methodology? Did they fall victim to a misinformation campaign? Did someone accidentally post a headline from way back in early 2020? Were they hacked?

Of course not. Obviously, it’s just a typo. No big deal. But the fact that a factor-of-1000 error is no big deal, is a big deal. It undermines the very idea that numbers mean anything. It rubs our nose in the fact that an incorrect number looks exactly like a correct number. In this case, the number is so wildly incorrect as to reveal itself on casual inspection. But most mistakes aren’t so obvious; and even this “obvious” mistake made it to the front page.

Imagine you’re in line at a restaurant buffet, and you see an employee drop a piece of meat. They pick it up from the floor, brush off the dirt, and plop it into the serving platter. Obviously you’re not going to eat it. Are you going to carefully choose a different piece of meat? Or, having seen their standard of hygiene, are you going to leave that restaurant and never come back? You wouldn’t put that food into your mouth; and you just saw a mainstream news source do the metaphorical equivalent of serving meat that had been dropped on the floor. Don’t put these facts into your mind.

Even When A Number Is Right, It’s Wrong

Mixing up “million” with “billion” isn’t even the worst part about that Covid statistic. It’s a big error, but it’s a one-off. The deeper problem is the phrase “most likely an undercount”. It is not likely an undercount; it is certainly a small fraction of the true value. The statistics the Times is citing – the statistics everyone always cites – are based on officially reported cases. If someone takes a home test instead of a lab PCR test, or if they never bother to get tested at all, or if they live in a part of the world where lab tests are unavailable, or if they have a mild case and don’t even know they’re sick – none of those infections are counted.

I couldn’t easily find an up-to-date estimate of total worldwide infections, but this article from way back in October 2020 cites a World Health Organization estimate of around 760 million. At the time, the number of reported cases was only 35 million. Or the CDC estimates that through September 2021 – i.e. before Omicron – 146 million Americans were infected. By now, well over half of the country must have had the virus. I imagine the same applies in most of the world, China (though this may be about to change) being the one large exception. “Half a billion” is completely disconnected from the actual infection count.

At least the Times specified “known” cases. Often, that distinction isn’t even mentioned. But anyone reading the headline is going to gloss over the word “known”. They’re liable to mentally compare half a billion cases with the world population of around 8 billion, and conclude that roughly one person in 16 has had Covid – when the truth is more like one in two.
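To make the gap concrete, here is a minimal sketch of the arithmetic in Python. It uses only the figures quoted above (35 million reported cases against the WHO estimate of around 760 million, and half a billion known cases against a world population of about 8 billion). The function names are made up for illustration.

```python
def undercount_factor(estimated_total: float, reported: float) -> float:
    """Actual infections per officially reported case."""
    return estimated_total / reported


def one_in_n(cases: float, population: float) -> float:
    """Express a case count as 'one person in N'."""
    return population / cases


# October 2020: WHO estimate vs. reported cases, as quoted above.
factor = undercount_factor(760e6, 35e6)
print(f"each reported case stood for roughly {factor:.0f} infections")

# The naive reading of the headline: known cases vs. world population.
naive = one_in_n(0.5e9, 8e9)
print(f"naive reading: one person in {naive:.0f}")
```

Even on these rough inputs, the reported count is off by more than an order of magnitude, which is why "one in 16" is the wrong picture to carry around.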

So saying that 500,000,000 worldwide Covid cases is most likely an undercount is like saying “most human beings live at least seven years, possibly longer”. Technically, it’s a true statement; in practice, it anchors your mind on a number that means something so different from what you think as to basically be a lie.

Errors, Errors Everywhere

The same day, in the same skim of headlines, the Times also provided this beauty:

The body of the article includes a slightly different phrasing: “Russia and Ukraine together export more than a quarter of the world’s wheat”. In actual truth, according to the best data I could find (link, link) Russia and Ukraine together export about 6% of world wheat production – less than a quarter of the figure provided by the Times. What the Times appears to have meant is that wheat exports from these two countries are close to 30% of global wheat exports. Most wheat is consumed in the country in which it is grown, so “wheat production” and “wheat exports” are very different. Incidentally, none of these figures tell us Russia and Ukraine’s share of wheat production; that’s about 15%.

It is certainly true that the invasion of Ukraine will cause serious disruptions to wheat supply for certain countries. But the idea that the world is going to have to make do with 25% less wheat is way off. In fact, this Twitter thread suggests that there will be essentially no net shortfall in the world’s wheat supply. I don’t know whether the analysis is correct, and there are parts I don’t understand, but it’s circulating widely and I have not seen a rebuttal. One point the writer makes is that wheat markets saw trouble brewing well in advance of the actual invasion, and as a result many large producers – notably India and the United States – planted more wheat than usual this season. Again, this doesn’t mean there won’t be disruption; but it will mostly be a question of getting wheat where it’s needed, not an actual global shortfall.

The takeaway, once again, is that numbers are often misinterpreted (share of world wheat production vs. share of exports vs. exports as a fraction of production) in a way that renders them wildly incorrect.
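A small sketch in Python shows how the same export volume produces very different percentages depending on the denominator. It normalizes world wheat production to 100 units and plugs in the rounded figures from above. The implied size of the global export market is derived from those figures rather than taken from any source, so treat it as approximate.

```python
# Normalize world wheat production to 100 units so shares read directly.
# All inputs are the rounded figures quoted above.
WORLD_PRODUCTION = 100.0
RU_UA_EXPORTS = 6.0             # about 6% of world production
RU_UA_PRODUCTION = 15.0         # about 15% of world production
RU_UA_SHARE_OF_EXPORTS = 0.30   # close to 30% of global exports

# Implied global export volume (derived here, not a sourced figure).
world_exports = RU_UA_EXPORTS / RU_UA_SHARE_OF_EXPORTS

shares = {
    "exports / world production": RU_UA_EXPORTS / WORLD_PRODUCTION,
    "exports / world exports": RU_UA_EXPORTS / world_exports,
    "production / world production": RU_UA_PRODUCTION / WORLD_PRODUCTION,
}

for label, value in shares.items():
    print(f"{label}: {value:.0%}")
```

All three ratios describe the same two countries and the same crop. Only the denominator changes, and that alone moves the answer from 6% to 30%.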

Serious Professionals Get It Wrong All The Time

It’s not just a newspaper thing. I constantly encounter misleading or incorrect numbers in my professional life. Important, consequential numbers. Just the other day, a manager posted a worried message on our internal Slack channel at work: server costs for one of the systems we operate had jumped $100,000 in a single month; something had to be done immediately!

The “every number is wrong” light immediately went off in my head: no way this was correct. And indeed, after a bit of poking around, I found:

Costs had actually jumped by $63,000. The $100,000 was a misreading of the graph (this happens, I do it myself all the time).

That was still 28% month-over-month growth. But this was the figure for March, and March has 11% more days than February! That explains almost half of the increase right there.

Customer usage of the system in question had increased 9%, and we expect cost to go up with usage.

Net of all that, the “unexpected” increase was only about $16,000, not $100,000.
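The breakdown above can be reproduced with a few lines of Python. This is a sketch under one assumption that is not spelled out above: that month length and customer usage scale cost as independent multiplicative factors. The function and parameter names are hypothetical.

```python
def unexplained_increase(increase, growth_rate, days_prev, days_curr, usage_growth):
    """Split a month-over-month cost jump into expected and unexplained parts.

    Assumption for this sketch: calendar length and customer usage act as
    independent multiplicative factors on cost.
    """
    previous = increase / growth_rate   # last month's cost, implied by the growth rate
    actual = previous + increase        # this month's cost
    expected = previous * (days_curr / days_prev) * (1 + usage_growth)
    return actual - expected


extra = unexplained_increase(
    increase=63_000,     # actual jump, after re-reading the graph
    growth_rate=0.28,    # 28% month-over-month
    days_prev=28,        # February
    days_curr=31,        # March
    usage_growth=0.09,   # 9% more customer usage
)
print(f"unexplained: ${extra:,.0f}")
```

Under that assumption the result lands within a few hundred dollars of the roughly $16,000 quoted above. A different way of combining the factors would shift it somewhat, which is itself a small illustration of the point.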

I should note that the manager in question is a great guy, someone we’re fortunate to have on our team. The moral of the story is not that he goofed; everyone goofs. The moral is that numbers have a way of amplifying every little mistake.

Other actual examples:

Calculations being way off because they were based on “active users”, but one person measured “one-day active” (customers who have used the system in the last day), another used “30-day active” (have used the system in the last 30 days), and they both just called them “active users”.

I can’t count the number of times I’ve seen people get data size requirements off by a factor of ten or more, because they mix up uncompressed and compressed files.

My all-time favorite: back when I worked at Google, I saw two talented engineers reject an engineering design because they thought it would require more hard disks than Google owned. Even back in 2008, that was a heck of a lot of disks, so I asked them to walk me through their math. It turned out that they had mixed up “activity per second” with “activity per day”, putting the analysis off by a factor of 86,400.

And I can’t help but end with the infamous incident where NASA lost a Mars probe because someone mixed up English and metric units. This is a real thing.

We Treat Numbers As A Shortcut To Understanding

Numbers are powerful tools. In a few short digits, we can summarize the entire history of the Covid pandemic. But that leverage cuts both ways. Just because a number can encapsulate a complex system, doesn’t mean it gives you understanding of that system. And when you lack understanding, you have no way of noticing when mistakes inevitably creep in.

To understand something, you need to put in effort. You need to view it from multiple angles, work through examples, dive into details. It is only then that you can hope to safely summarize the system in a handful of numbers. A number is not a shortcut to understanding; it is a tool for those who have already achieved it.

I spotted the million / billion and known cases / total cases errors because I have a good mental model of the pandemic, acquired through extensive reading. I knew about the wheat-exports error because I read a Twitter thread where that exact error was pointed out by someone who knows wheat markets. I spotted the errors at work because I was an expert on the systems in question. Who knows what errors I’m not noticing?

How To Handle Numbers Safely

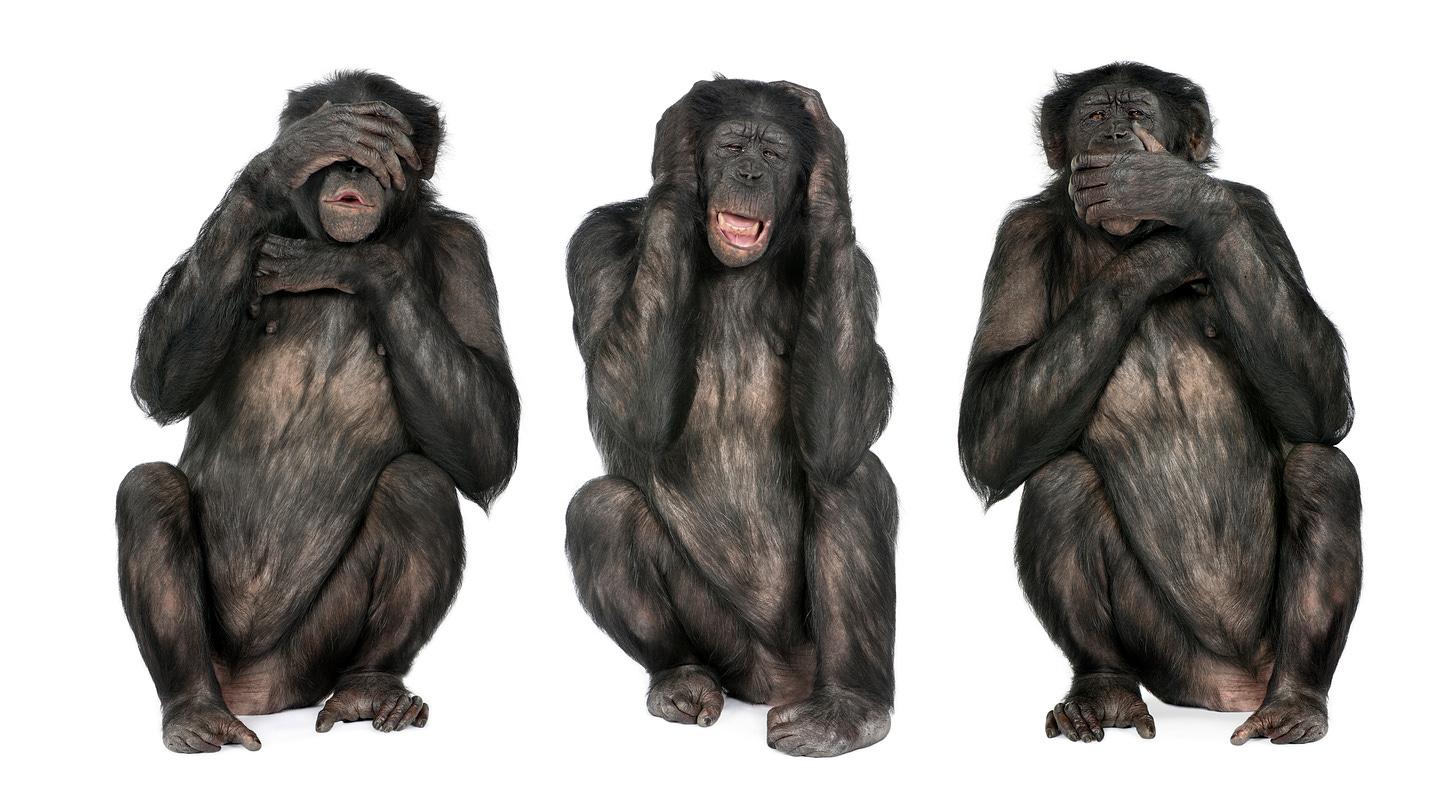

The first rule of numbers is to distrust them by default. Apply a little blur filter in your head – pretend the numbers aren’t there:

This headline is obviously telling us that cases have continued to increase, and that’s the only part we should consider even potentially trustworthy.

When you must rely on numeric information, you’ll need to put in work.

Do enough reading to understand the system you’re analyzing at more than just a glossy surface level. Apply reality checks – do the numbers make sense? Do they fit with your understanding of the world?

Come at the problem from multiple directions, and make sure you get consistent answers.

Make sure you understand exactly what a number is intended to represent: units, scope (total cases, or known cases? total production, or exports?), and other context. Be a complete pain about this, make sure everything is spelled out explicitly.

Keep a sharp eye out for weasel words like “known cases” or “the death toll may increase as bodies are discovered”; these can conceal huge understatements.

It’s somewhat safer to compare numbers that are computed on a consistent basis using consistent methods. For instance, if you read that wheat production increased 3% in 2021 compared to 2020, that’s relatively likely to be correct. You never know! Perhaps a country dropped out of the reporting system, or there was an error in one year’s figures. But the odds are better.

On the other hand, comparing numbers from different sources – for instance, wheat production from one source with wheat exports from another source – is especially fraught.

But most important of all: never allow a number to fool you into thinking you understand something. There is no shortcut to understanding.

Further, numbers are opinionated! Someone gathered the numbers for some particular purpose, and the numbers reflect the purpose for which they were gathered. This is something that the Machine Learning people are continuously rediscovering.

`Keep a sharp eye out for weasel words like “known cases” or “the death toll may increase as bodies are discovered”; these can conceal huge understatements.`

This reminds me of the fight I had with my friend during the initial stages of Covid-19 (yes, we spent our time fighting about things we have no control over). In the initial stages of Covid, as we all know, the number of resolved cases was a tiny fraction of the total number of cases (active + resolved). One fine day, the guy mentioned that the mortality rate for our country was very low (India got too many cases in a few weeks). I couldn't help but notice that the mortality rate was calculated as total number of deaths / total cases, and I kept telling him that the number is meaningless if the total number of cases is not comparable to the total number of resolved cases. People who caught Covid didn't die immediately. Those who died, died some days later, and when the numbers are increasing exponentially, this difference is crucial. I saw that after the numbers settled, people were kinda alarmed that the number was increasing again from the low.

I still can't understand how the WHO was using that metric when the pandemic was exploding day by day.